This just in: do not turn on your unlit gas stove inside an airtight kitchen sealed with tensioned 4-6 mil ZipWall plastic sheets secured floor-to-ceiling with foam bars, painter’s tape, and sandbags.

— Iowahawk reviews the science

Frieren 2, episode 5

In which Frieren does the meme, and then gets sentenced to 300 years hard labor.

I’m guessing there’s an AI behind this…

Dear Apple,

A while back I bought the recently-updated AirPods Pro 3. They worked fine with my phone, tablet, and laptop, but Apple’s “find my” service couldn’t see them. At the time, the explanation was that I hadn’t yet upgraded my devices to the “Liquid Ass” OS v26. Since I wasn’t traveling much (indeed, I often don’t leave the house for days, especially the way this winter has been going…), I didn’t worry about it.

Now that they have a mostly working 26.3 release, and they’ve added back some of the legibility that was abandoned in the pursuit of random UI restyling, I updated my phone and tablet (not the Mac; that needs to work). To no surprise, the AirPods did not magically start working correctly in “find my”; they were detected, but it insisted that setup was incomplete and they could not be located.

Clicking on the error message took me to a support page, where none of this worked. At all. There’s yet another screen where you have to completely disassociate the device from your Apple account and then reconnect.

(flux2 is a lot slower and more memory-intensive than Z-Image Turbo, but it’s better at text, styles, and prompt following; there’s a lot I can’t do with it because my graphics card only has 24 GB of VRAM, but for simple one-off pics without refining, upscaling, or multi-image editing, it’s great; I’m thinking about setting up SwarmUI on Runpod with a better card, for the times when I want precise prompting)

Amazon Japan gets clean

Unless you have an active VPN connection with Japanese servers, you can no longer view adult books and videos on Amazon Japan. Searches pretend there’s nothing there, and direct links to products throw up an error page as if the page doesn’t exist. Items in wishlists appear normally with their title and picture, but you can’t click on them or move them to your cart.

(the accurately-rendered poster titles come from the collection of dirty-book covers I acquired some years back as part of my collage-wallpaper project)

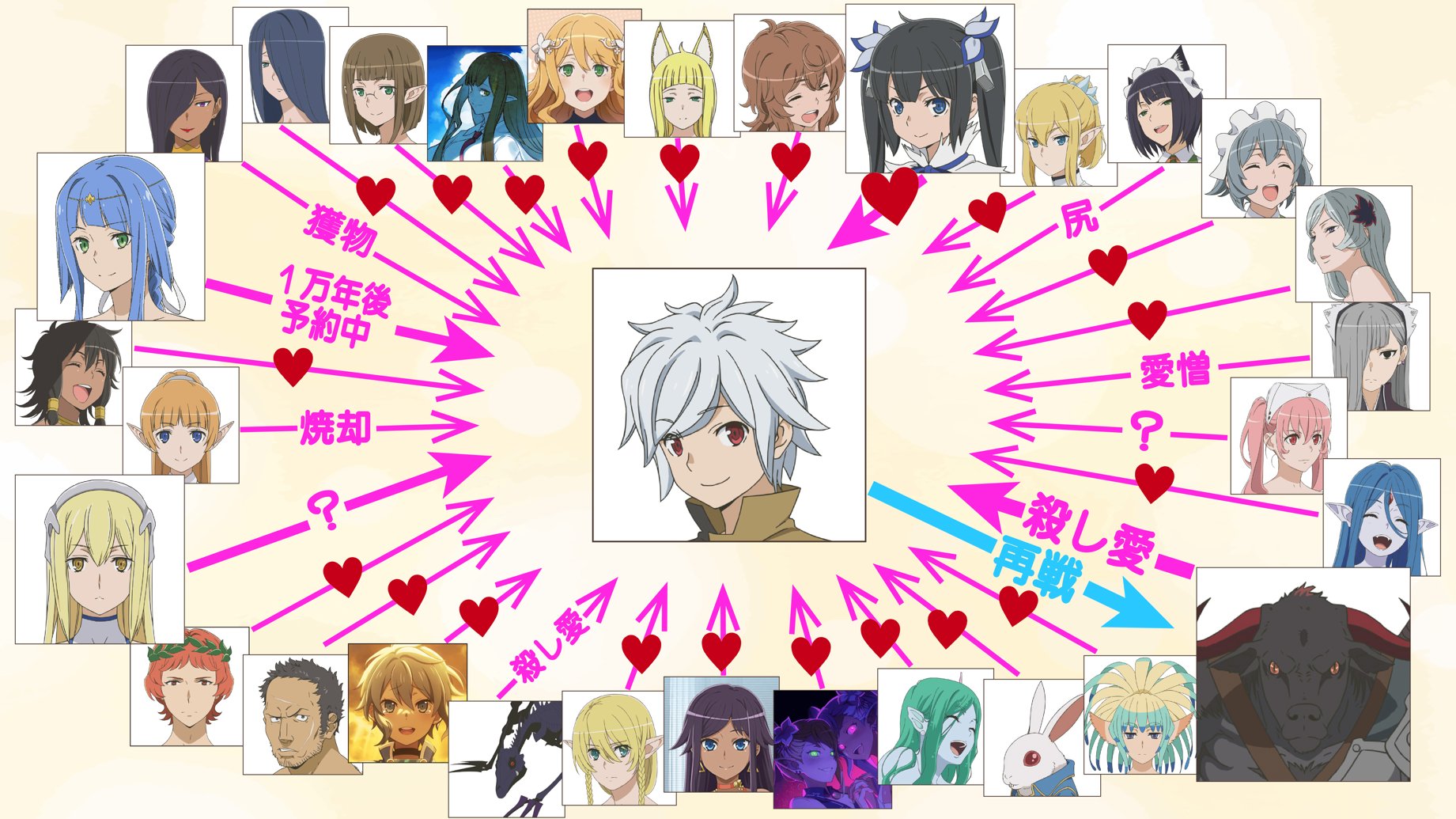

How to spot the hero in a harem anime…

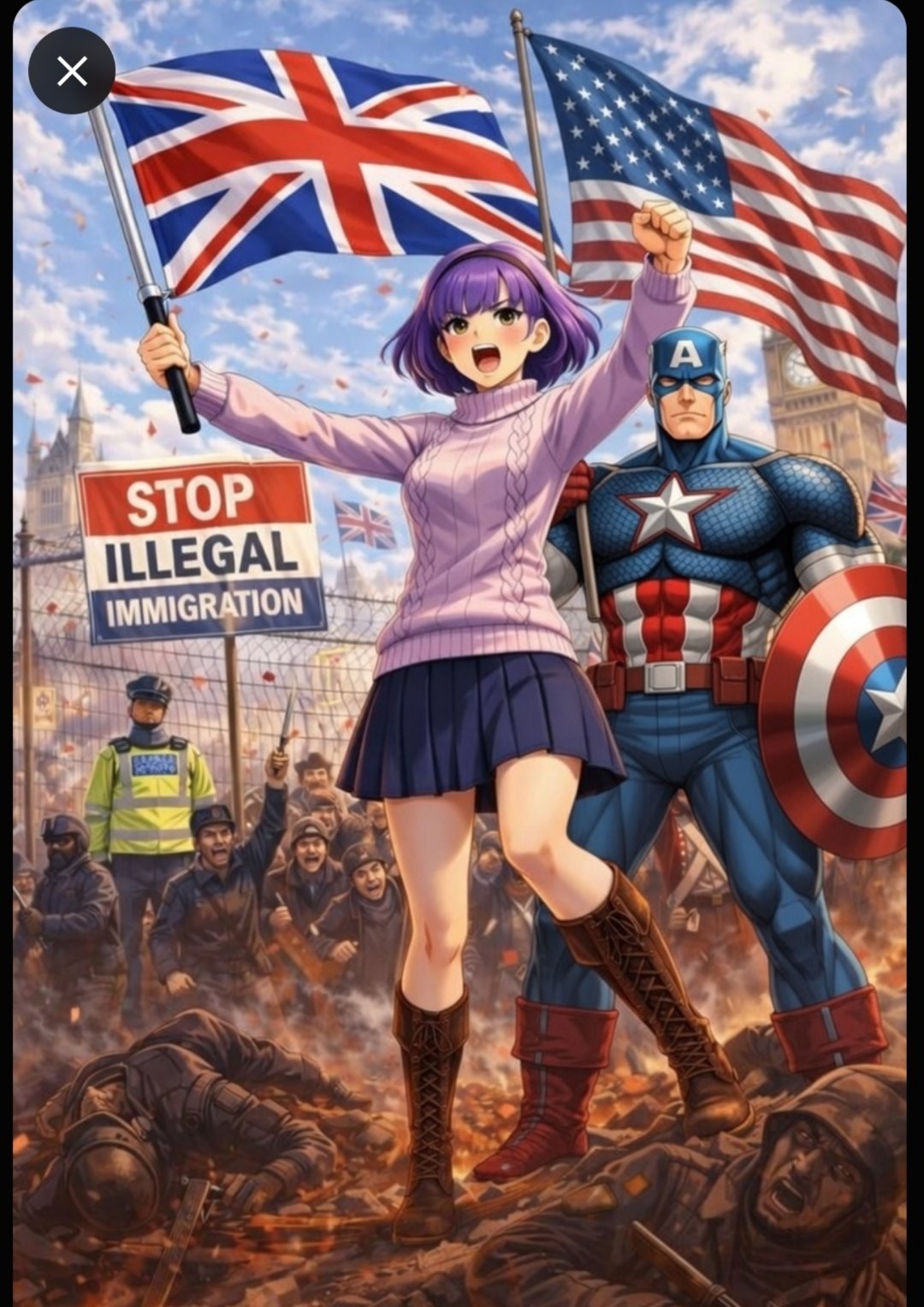

“Well, bye”

[Update! Our Wholesome Choirboy doesn't want to go back to Ireland because... he fled the country for dealing drugs and obstructing police].

[Updated update! He also abandoned two small children when he became a fugitive; all we need now is for him to have set fire to a police car, and he'll be a proper Democrat]

Insty links to an idiotic tweet by no-borders-for-you libertarian Nick Gillespie, who seems to have expected that MAGA would repent of its sinful ICE-worship upon seeing a sympathetic Irish, white illegal. To no one’s surprise except perhaps Gillespie’s, only the usual suspects answered the call.

Perhaps because it was quickly pointed out that Our Not-So-Diverse Hero overstayed a tourist visa for over fifteen years before marrying a citizen in 2025 in an attempt to dodge deportation. Gillespie also fails to mention that he’s only being held in detention because he refused cash and a free trip back to Ireland. Also, how was Our Upright Immigrant making money without valid ID all those years?

(from the Cherish The Ladies album of the same name; I like the song, but the message falls apart when you actually think about the lyrics…)

(side note: Cherish The Ladies ended up suing their record label for unpaid royalties…)

From the X files...

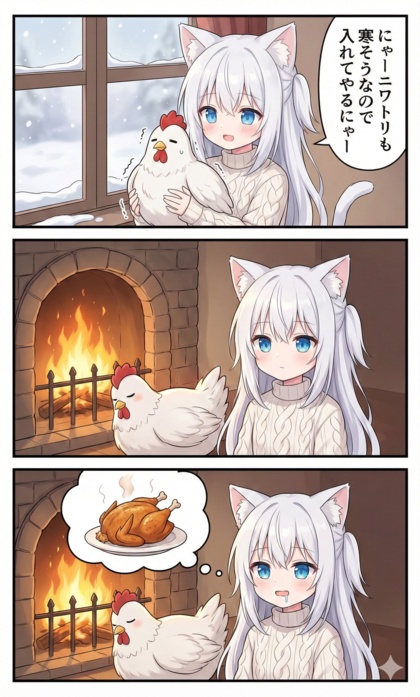

If you’re cold, they’re cold…

Saki is politely baffled

Eight years ago, I blogged this picture:

This week, it appeared on xTwitter again in a new context, and she linked to it, asking “WTF?”.

A number of people made an attempt to unpack the layers of bullshit, but I don’t think they were successful. What I found most interesting about wading through it all was that not a single person ever brought up the little joke she was making in the original picture. They were too busy grinding axes for one side or the other.

Perspective, forced

Choose wisely

(pity they all look like mass-produced emotionless fembots fresh off the assembly line…)

“Go Fish yourself”

(not sure how I feel about someone hijacking the Amelia meme to build their social-media engagement; at least it’s not some Leftist weenie trying to subvert it)

Not every baffling picture is AI…

(amusingly, when I used several edit models to try to restore and colorize this photo, they all gave Godzilla a dog head unless I specifically called it out as “a picture of Godzilla”; so, yeah, beware invented details in “AI-enhanced” photos)

Days Of Future Past

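「記憶を残したまま小学生(何年生でもいい)に戻れる」 (“You can go back to being an elementary-school kid, any grade you like, with your memories intact”)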

My translation:

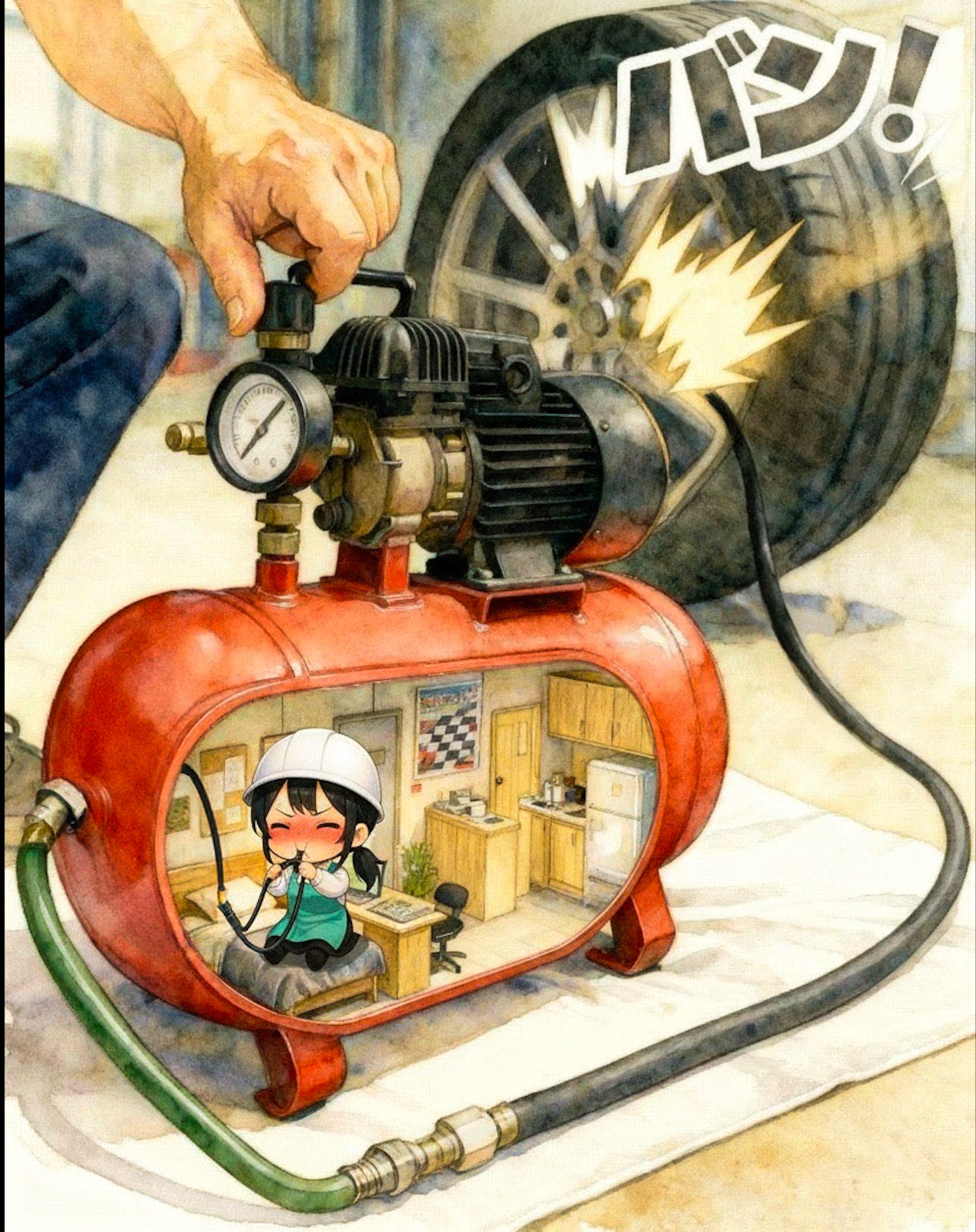

(had to go all the way up to Flux.2-Dev to get the text to render accurately, which took 3 minutes to generate; Klein and Qwen Image Edit’s text rendering ranged from “passable with terrible spelling” to “complete gibberish inked in a random font”)

Frieren 2, episode 4

This week, it’s the thought that counts, especially when you’re a pair of socially-awkward teens fumbling through their first date. Much easier to fight ogres. Or Pokemon.

(fan-artists frequently draw Fern much bustier than she is in the anime, while Nagi is pretty much spot-on)

Underground Healer, anime and novel

Since I’m only watching Frieren this season, I cast back looking for recommendations of completed series, and found a lot of positive commentary for this one. I’m watching about an episode-and-a-half a day while on the elliptical, but it’s interesting enough that I picked up the first few translated light novels.

And then I hit this bullshit in book 3:

“You clap at us, we clap back harder.”

The speaker is one of the three slum-gang boss hotties, squaring off with a bruiser from the Black Guild who’s smashing up their festival. She says this as their argument escalates into a full-scale gang battle.

This is not the first line that didn’t ring true, but it’s easily the worst.

Not only does the slang instantly date itself, it makes no fucking sense in the scene. It’s like a mafia don getting uptwinkles from his lieutenants.

What could possibly go wrong?

Apple is allowing third-party AI apps in CarPlay. The jokes just write themselves…

I’m okay with it this time…

I put in an Amazon order Thursday night that promised delivery between 7 AM and 11 AM Friday. Sometime before that window opened, the “clear skies” forecast proved wrong, and 4 inches of fresh snow was dumped over the course of several hours. It didn’t stop until after noon, and the trucks didn’t come out to clear the roads until evening.

I cleared a path to the street and salted it, but I didn’t actually expect the package to show up until Saturday, which it did.

A quick Amelia...

I knocked together a quick prompt based on the Last Rose of Albion version.

Original Amelia wore a darker jacket:

After my earlier text-rendering adventures, it didn’t surprise me that Z-Image Turbo couldn’t render “whoso” (whoro, whojo, whoyo, whobo, …), but I was annoyed that it kept changing “hammer” to “hommer”, and I could see it start off right and then mis-correct it; also, more often than not, the sword was not in the stone.

The new hotness is rendering with ZIT and then doing style edits with Flux.2 Klein; this is easy to set up in SwarmUI thanks to Juan’s Base2Edit extension. Same pic, with style set to “colored-pencil sketch with precise linework”:

Upcoming anime

Setting aside the sequels to shows I never watched or have abandoned, spring is not looking great, but summer has some promise:

- Spring: Farming Life In Another World 2: still hoping they stop being coy about how thoroughly the divine farming tool plows the fields. The first trailer shows off plenty of pretty gals, at least, so it promises to be as decorative as the first season.
- Summer: The World’s Strongest Rearguard: please-don’t-suck-please-don’t-suck. Still no video preview, but the initial casting is up, and Our Upright Ass-Guardian is voiced by The Universal Boy Hero, Mute Lizard Best Girl is Komi, Sexy Former Manager’s biggest roles have all been porn, Cursed Swordsgirl is perhaps best known for voicing 2B, and Jailbait Shrine Maiden is Yor (aka Visha, Yun-hua, Ryu, Angeline, etc). And both the light novels and manga are resuming after a lengthy hiatus.
- Summer: Bumpkin & Harem 2: yes. From what I know, the gals never actually become haremettes, despite Red showing signs of jealousy. I would like to see Age-Appropriate Hot Magic Teacher emerge as the leading candidate for wifehood, but White is determined.
- Summer: Skeleton Knight 2: yes, please.
- Summer: Magilumiere 2: yes-yes-yes!

Mini-gyoza!

I’m really looking forward to spending a few weeks in Japan soon. Osaka-style hitokuchi (one-bite) gyoza will be on the menu, but not quite this tiny. It’s going to be a mostly-Kyoto trip, but thanks to my sister having to leave early, I’ll have two-and-a-half days on my own in Tokyo.

Frieren 2, episode 3

Not the usual hot-springs episode. Or the usual first-date episode. As usual for Frieren, it’s the journey that matters.

Related,

I would not have guessed that Himmel had the same voice actor as Bakugo and Accelerator. I learned this after viewing a short clip from the Japanese-dubbed Chinese animation series “The Last Summoner” and finding the voices of hero and heroine kinda familiar.

It’s a shouty, tropey Boy-Meets-Goddess show where she spends most of her time as a bratty shoulder chibi, because full-figured-full-power form is too expensive to maintain, and any power use at all requires stuffing her face. Y’know, a Completely Original Story™.

The punchline is that her voice actress is Frieren.

(Anime News Network has no record of this show, so off to MyAnimeList; licensed by Crunchyroll, by the way, who lists it as two seasons, but it’s just Chinese vs Japanese audio)

Unrelated,

Chaney’s latest series “Accidental Astronaut” is called that because…

…“Flash Gordon” was taken.

Z Image Base knows ray-guns and retro, but the name “Flash” is rather heavily trained on the wrong character. It does get some things right, though:

MZ4250 pulls a Penzeys

The creator of a large collection of excellent 3D models for gaming miniatures just announced on his Patreon that he’s got severe IDS (ICE Derangement Syndrome), and has invited people who disagree with him to stop supporting him financially.

His proposal is acceptable.

Status

But first, a little post-Christmas cheer

Part 2 of the English version of Chiharu’s Christmas story.

Home again warm again

The furnace guys came out Monday morning, determined that it was toast, and scheduled the installation of a new one for Tuesday morning.

Which meant I spent Monday afternoon enlarging the path to the street into something wide enough to get my car out (and their truck in). I only went as far as Home Depot to pick up two space heaters, which they still had a few of. I only really needed them for one day, but I can always put one in the garage to keep it above freezing out there, and the other one will be useful occasionally.

I also bought some softener salt, and the cashier was concerned that I might try to use it as ice melt, which everyone is completely out of. I reassured her that it would only go into the water softener, but didn’t bother explaining that it’s going to stay in the trunk of the car for a few days to put some weight over the rear tires for traction.

Tuesday morning, I was spreading some of my remaining salt on the driveway to ensure there weren’t any icy spots for the furnace guys, and they showed up just as I finished. Start to finish, it took them four hours, which left about two hours for the house to warm back up 15 degrees before I picked my sister up at the airport.

I’m not going to try to clear the rest of the driveway today, just widen the lane a bit and scrape up whatever the wind has blown back over the previous work. I’m much too sore to do another whole 75-foot-long lane, and it’s too cold out there.

And add more salt. Which I now have plenty of, after finding one brand in stock at one store (Lowe’s). I’m storing it in the trunk of my car for extra traction.

(the shiny new Z Image base model is primarily designed to be used to create fine-tuned models, so it’s difficult to use directly, and can be disappointing compared to the effortless goodness of the Turbo model)