Cheesecake

Cheesecake Vault, January 2019

First pass through this month’s archives knocked out 3/4 of the pics; eliminating another 2/3 in the second pass brought it down to a reasonable size.

Waifu Drop

I may try to watch “stuck at level 1 with amazing loot rolls” for minor amusement, mostly for the bunny-suited bunnygirl. Other than that, the primary (ludicrous) twist on the usual isekai formula is not how Our Hero exploits gamelike abilities in a generic fantasy world, but the fact that everything in that world comes directly from dungeon drops. Including the air they breathe.

Between this and Vending Machine, I may laugh this season. I may also laugh at Atelier Thighza if the fan-service in the actual show is as over-the-stocking-top as the trailer; I’ll find out Saturday.

(unrelated bunnygirl is unrelated, due to the near-total lack of fan-art of this series that has 7 novels and 10 manga volumes…)

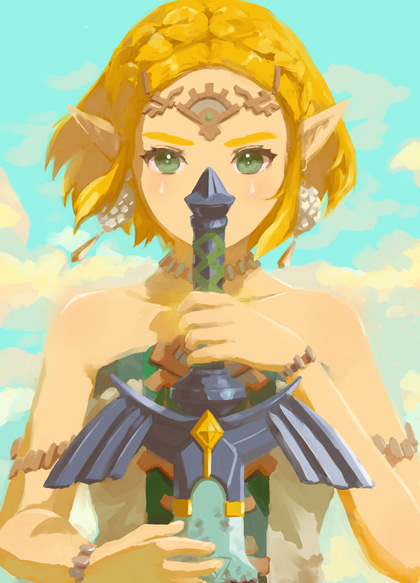

TotK Hylian Shield

Forget the clickbait articles (and there are many, most filled with obnoxious ads and dubious JavaScript) telling you how to get to the Hylian Shield early in the game. You don’t need to eat a ton of stamina boosts and glide to the Serutabomac shrine, and you don’t need to go in through the Docks and avoid/fight Baby’s First Gloom Hands.

Enter from the ground, work your way around the spoiler under the castle toward the Northeast corner until you reach a section of wall due south of the top of the waterfall, and look for a cobblestone path leading directly into the spoiler, at (-0137, 1089, 0079). If you walk off the ledge at that location, there’s a cave entrance directly behind you, and you can just walk down a short flight of stairs, light the bonfire, and grab the shield.

If you already visited the shrine, you can also easily glide to this location by going around to the back and jumping off the southeast corner.

Now, if you want to get the Master Sword early, you’ll need two full stamina wheels, a wing with two fans (and maybe a battery if you don’t have much power, or a nice rocket shield), and a whole lot of patience. The best way to reach it is to go to the southern tip of the island you woke up on and look to the east. The spoiler will first appear to the right of Zonaite Forge Island, moving left. It will make a 180° turn, and as soon as you have a full starboard broadside view, launch your wing and head for the nearest point. Once you’re on board, pick up a few souvenirs as you head to the front, and then draw the sword.

You might have to wait as long as two full hours of real not-in-a-menu play time for it to appear, so bring along a good book.

(or a bad book, I won’t judge you…)

Stupid Moderator Tricks

“Hey, if we mark ‘our’ subreddit NSFW, those assholes can’t sell ad space, and we can go back to business as usual! Of course, we’re still blocking search engine traffic, and now we’re keeping kids from asking questions about the most popular Nintendo Switch game of the year, but you’ve got to break a few omelets to lay an egg!”

What could possibly go wrong?

Bikinichain beats blockchain

Pixiv: girls with guns

Nothing says sexy like a woman packing a pair of 44’s… and a gun.

Summer anime

Holding at two shows to watch: Ryza and Vending Machine.

(amusingly, the official abbreviation of the typically ridiculously long isekai title is jihanki)

Unrelated, I have my differences with the NRA, but…

…I am now a certified Range Safety Officer.

Gun-service time!

Surprisingly SFW set; I guess the folks on Pixiv have trouble tagging guns when there are nipples on display.

Cheesecake Vault, December 2018

I think I’m getting better at whittling down the archives. Or at least quicker…

Where have you been?

I just received a package that Amazon sent to me in January (and quickly declared lost and replaced). Via USPS, of course.

(it had a note scrawled on the side “6.15 postage due”; I don’t know who they expect to collect that from)

Anker Man

Anker, maker of devices that transform AC power into USB charging, released a… Transformers tie-in model. Five months ago, but Amazon didn’t recommend it to me until today. Not that I’m in the market for one.

Custom kydex that isn’t

I’m not going to name-and-shame the vendor, yet, but I went looking for a good OWB mag carrier for a Ruger LCP Max, found a company in Texas that had a nice-looking product and advertised compatibility, and received something that was not only not left-handed, but that was so oversized that you could drop the mag in and rattle it around.

Turns out they didn’t have a sample in stock to form the kydex around, and guessed that it must be about the size of a Sig P365. But they didn’t have a sample for the .380 version of the P365, so they used the 9mm mag. And just in case it was a little too big, they included a replacement washer to narrow it down.

Closest thing I’ve got that fits in it is a .40 CZ-75B mag. I sent pictures off to the vendor, talked to them on the phone, and they said they’d stop at the nearby Cabela’s, pick up the right mag, and make a replacement (properly left-handed). Even if the new one is correct, I definitely won’t order from them again, because if I wanted something generic that might kinda fit if you tweak it, I wouldn’t have ordered model-specific custom kydex.

(Tanya is not amused, and you know what that leads to…)

Cheesecake variety pack

Pixiv: garter belts

This one leans a bit toward the NSFW side, for obvious reasons. I was honestly surprised I found as many SFW pics as I did, given the poses and camera angles generally involved in showing off garter belts and what they’re attached to.

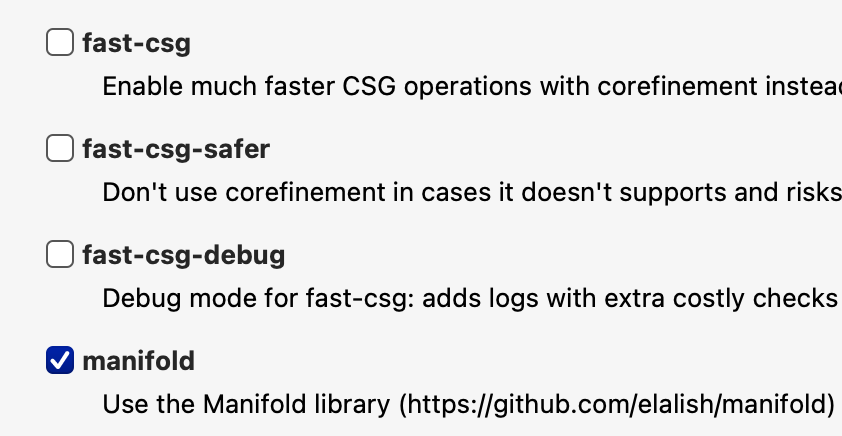

OpenSCAD goes zoom

I’ve been too busy to do any 3D printing recently (everything’s still in boxes in the garage from the move), so I hadn’t checked in on OpenSCAD, which is a wonderful tool afflicted with cripplingly slow rendering. Fixed now:

The new Manifold renderer is much faster and multi-threaded, allowing significantly more complicated models.

Sick Transit, USPS?

Pretty sure the two-day priority mail package that was shipped from Texas on Wednesday won’t be getting here on time. It made it to Cincinnati by Friday, about 45 miles away, but on Saturday it went to Des Moines, Iowa, over 600 miles away. On Sunday it rested. Also Monday.

It left Des Moines at 3:25 AM Tuesday for Milan, Illinois, and arrived/departed at 6:33 AM. Maybe it’ll turn up in Indianapolis next.

(I’d prefer to have all packages delivered by Kiki, but she’s not available in my area)

Garter time!

Cheesecake Vault, November 2018

I gave up whittling the list down after knocking out over 85% of the images I downloaded this month. Still a very long list, but there’s a lot to like here.

Zelda Identification Fail

This popped up on Amazon, anticipating the upcoming release of the new game:

Um, no:

Wide base to prevent loss

Sadly, the plastic is brittle, and if you actually use them as snap-caps, the rim will break off and it will get stuck inside.

Inside the chamber, that is. I needed to jam a cleaning rod down the barrel to get my .22 revolver back open.

On with the cheesecake!

Pixiv: busty catgirls

Just to make sure I had enough… volume, I included both おっぱい and 巨乳 in my 猫耳.

Cheesecake Vault, October 2018

I wish to state for the record that I had never heard the word “actioner” before this past Sunday, when we found it in the back-cover blurb of the DVD for a movie made in 1996. I’ve an extensive movie library, and I have subscriptions to most of the streaming services, but this is the only time that I’ve ever seen a film described as an “actioner”.

On with the cheesecake action!

Pixiv: pushing buttons

There are a number of Pixiv tags that express the content in terms of what the viewer would like to do about it. A simple and fairly clean example is 指を突っ込みたいへそ = “belly-button I want to stick my finger into”. This of course brings to mind the old joke that ends:

“That’s not my belly-button!”

“That ain’t my finger, either!”