“I gave him a gun. I gave him a badge. I gave him the training. If he didn’t have the heart to go in, that’s not my responsibility.”

— Scott Israel, Chief Nosepicker, Broward County Sheriff's Department

Green New Deal FAQ

… the short form.

Well, that explains a lot...

Jim Kirk and time travel: just say “no”.

Pixiv Champloo 3

So here’s an amusing Hugo note: if I don’t put anything above the fold, it won’t show the “more” button at all. So, blah blah blah cheesecake.

Dear US Customs Department,

Please update your JavaScript validation code on the Global Entry application page to accept brand new passports issued during the current month.

Update

Works today. Someone must have noticed before I did, because I sincerely doubt that a government agency could make, test, and roll out a change to a web site on a Sunday night.

Now to see how long the queue is.

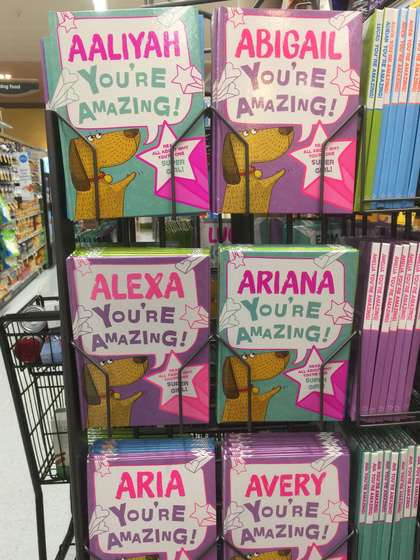

Dear Amazon,

Are you sure you’re allowed to advertise to kids this way?

Update

Grrr, I hate EXIF image-rotation and applications that don’t convert it to actual rotation on save.

Reminder for the next time I need this…

`mogrify -auto-orient foo.jpg`

(lossy, but quick for images I intend to resize down for the blog anyway)
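Since these images get resized for the blog anyway, both steps can probably be folded into one pass. A rough sketch, assuming ImageMagick's `mogrify` is on the path; the `fix_for_blog` name, the `MAX_SIZE` variable, and the 1024-pixel default are made up for illustration:

```shell
#!/bin/sh
# Sketch only: bake the EXIF rotation into the pixels, then shrink for the blog.
# fix_for_blog, MAX_SIZE, and the 1024 default are illustrative assumptions.
# Note that mogrify overwrites each file in place, so work on copies.
fix_for_blog() {
    max=${MAX_SIZE:-1024}
    for f in "$@"; do
        # -auto-orient rotates according to the EXIF Orientation tag and resets it.
        # The trailing ">" in the geometry shrinks only images larger than the box.
        mogrify -auto-orient -resize "${max}x${max}>" "$f" || return 1
    done
}
```

Usage would be something like `fix_for_blog foo.jpg bar.jpg`, or `MAX_SIZE=800; fix_for_blog foo.jpg` for a smaller bounding box.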