Monday morning double feature

Farm Harem Maybe 2, episode 2

This week, Our Hoe-Master Hero finally takes a ride on another gal. Unfortunately, that was literal, since she’s a centaur. Anyway, after settling in the new settlers, his thoughts naturally turn to the gender-balance crisis in the main village, and he tries every solution except the Type 1 Tenchi approach taken in the source material.

Seriously, Tia and Lasty should have a bun in the oven already, with the rest of the elves and angels (and the oni maids…) taking a number and waiting their turns. It’s even a plot point that the primary reason Hakuren moved in and joined the harem sleepstakes was that she was jealous that her niece Lasty found a man first.

Verdict: oh, well, even the sanitized version is fun, for now.

Witch Hat Atelier, episode 3

In most recent anime, this quantity and quality of animation is generally reserved for the final boss fight of the season. More, please.

Also, hot grown-up witch gal unlocked.

(just don’t let them cross over into the world of Littlewitch Romanesque…)

Proving the point

“Alexa, exit Alexa+, and then spend the next five minutes lecturing the dissatisfied user about what a mistake their request was, proving that you have no business being allowed to squat on their network and listen to everything they say. Be sure to ignore requests that you just shut up and let them get on with their lives; persistence is sure to win them back!”

GenAI image instability

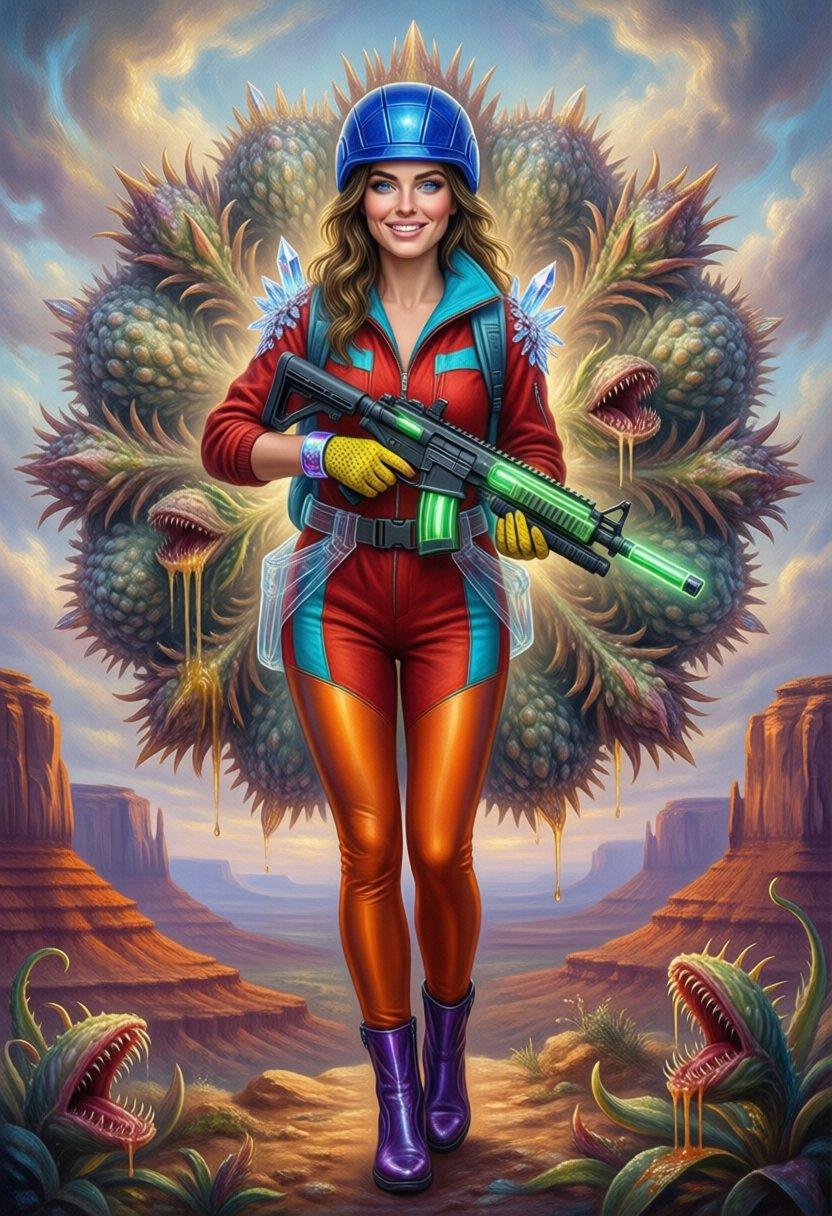

Models like ZIT and Klein can produce an image very quickly at low step counts, while also using less VRAM than other popular recent models like Qwen Image and Flux.2-Dev.

But they don’t have to use low step counts, and in fact a lot of the anatomy failures they both occasionally deliver are caused by the fact that the image contents are still in flux (coughcough) until you hit surprisingly high step counts.

SwarmUI shows you tiny preview images of each step while it’s rendering, and I’ve often noticed that the images change dramatically from step to step. ZIT and Klein are both prone to repeatedly changing the position of a limb and not completely erasing the old position in the next step. If it happens on the final step, you get a reject.

For a while now, I’ve wanted to capture those tiny previews and turn them into animations for review. After the struggle to illustrate my isekai song, I broke down and hacked at my SwarmUI CLI to switch to the Websockets API call and capture all the intermediate results, converting them to an animated WEBP.
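For the curious, here's a rough sketch of the capture-and-assemble step in Python. It is not the actual CLI code. It assumes the websocket messages have already been collected as JSON strings, and that each progress message carries a base64 data-URL preview under `gen_progress.preview`; that message shape is my assumption here, so check it against what your SwarmUI version actually sends. The function names `extract_previews` and `save_animation` are made up for illustration, and the second one needs Pillow built with WebP support.

```python
import base64
import json


def extract_previews(messages):
    """Return raw preview image bytes, in arrival order, from JSON message strings.

    Assumed (not verified) message shape:
    {"gen_progress": {"preview": "data:image/jpeg;base64,...."}}
    Anything that does not match is skipped.
    """
    frames = []
    for raw in messages:
        msg = json.loads(raw)
        progress = msg.get("gen_progress")
        if not isinstance(progress, dict):
            continue  # status updates, final image, etc.
        preview = progress.get("preview")
        if not isinstance(preview, str) or "," not in preview:
            continue  # progress update without a preview attached
        header, payload = preview.split(",", 1)
        if not (header.startswith("data:image/") and header.endswith(";base64")):
            continue  # not a base64 image data URL
        frames.append(base64.b64decode(payload))
    return frames


def save_animation(frames, path, ms_per_frame=100):
    """Write the captured frames to an animated WebP file using Pillow."""
    import io

    from PIL import Image  # third-party; imported lazily so the parser above is stdlib-only

    images = [Image.open(io.BytesIO(f)).convert("RGB") for f in frames]
    if not images:
        raise ValueError("no preview frames captured")
    images[0].save(
        path,
        save_all=True,              # write every frame, not just the first
        append_images=images[1:],
        duration=ms_per_frame,      # per-frame display time in milliseconds
        loop=0,                     # loop forever
    )
```

With the messages in hand, usage would be something like `save_animation(extract_previews(messages), "steps.webp")`. A slower frame rate makes it easier to spot the step where a limb jumps.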

I learned a lot. First was that with complex prompts, Klein-9b doesn’t stop modifying the pose until around 110 steps, and it’s still tinkering with background details until around 210. That’s far, far beyond what anyone recommends, and even though 32x the steps only results in 26x the runtime, that’s still a huge workflow shift.

Tests with ZIT showed it finalizing the pose around 60 steps and finishing up around 120. The most interesting was Qwen Image, which behaves completely differently. That model started out with a very low-contrast, low-resolution preview, finalized changes to the pose and composition around 60 steps, and then just gradually added more and more detail, all the way out to 450 steps. The end result was significantly better, but not 10+ minutes worth.

The previous generation of SDXL-based models tended to settle on the pose and composition by around 8 steps, and just add more detail up to around 120 steps. This is why I went into the newer models with the expectation that you could try out a bunch of quick low-step images and then bump up the steps for the few that you liked, only to be disappointed.

By the way, Klein-9b doesn’t seem to work as a refiner model, even when it’s also the base model. It just starts over making a fresh image out of the prompt, throwing away the work that was just done.

Qwen Image: 20 steps

Qwen Image: 50 steps

Qwen Image: 500 steps

R_IllustrMix: 128 steps

This is a fairly recent SDXL/Illustrious model that has lots of anime, furry, and NSFW training. Even though models like this are mostly trained on tag-style prompts, it still manages to come up with something out of the really long paragraphs I’m generating now.
