Type

Automating PDF cleanup with Acrobat and AppleScript

As I mentioned earlier, I’m generating lots of PDF files that don’t work in Preview.app, and are also a tad on the large side. Resolving this problem requires the use of Adobe Acrobat and Acrobat Distiller. Automating this solution requires AppleScript. AppleScript is evil.

Just in case anyone else wants to do something like this from the command line, here’s what I ended up with, which is run as “osascript pdfcleaner.scpt myfile.pdf”:

    on run argv
        -- Build a file reference from the current directory plus the first argument
        set input to POSIX file ((system attribute "PWD") & "/" & (item 1 of argv))
        -- Derive the intermediate PostScript path by replacing every ".pdf" with ".ps"
        set output to replace_chars(input as string, ".pdf", ".ps")
        -- Acrobat: open the PDF and save it back out as PostScript
        tell application "Adobe Acrobat 7.0 Standard"
            activate
            open alias input
            save the first document to file output using PostScript Conversion
            close all docs saving no
        end tell
        -- Distiller: turn the PostScript back into a PDF
        tell application "Acrobat Distiller 7.0"
            Distill sourcePath POSIX path of output
        end tell
        -- Blank out the file type and creator codes so the PDF opens in Preview.app
        set nullCh to ASCII character 0
        set nullFourCharCode to nullCh & nullCh & nullCh & nullCh
        tell application "Finder"
            set file type of input to nullFourCharCode
            set creator type of input to nullFourCharCode
        end tell
        -- Bring Terminal back to the front
        tell application "Terminal"
            activate
        end tell
    end run

    -- Replace search_string with replacement_string using AppleScript's text item delimiters
    on replace_chars(this_text, search_string, replacement_string)
        set AppleScript's text item delimiters to the search_string
        set the item_list to every text item of this_text
        set AppleScript's text item delimiters to the replacement_string
        set this_text to the item_list as string
        set AppleScript's text item delimiters to ""
        return this_text
    end replace_chars

[I wiped out the file type and creator code to make sure that the resulting PDFs opened by default with Preview.app, not Acrobat; I swiped that code from Daring Fireball. The string-replace function came from Apple’s AppleScript sample site.]
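The replace_chars handler splits the string on one delimiter and then joins the pieces with another. Below is a rough Python equivalent, written purely to illustrate the technique. Both function names are hypothetical. Because split/join replaces every occurrence, a folder whose name contains ".pdf" would also be rewritten. The second function is an assumed safer variant that changes only the trailing extension.

```python
def replace_chars(text: str, search: str, replacement: str) -> str:
    # Split on the search string, then rejoin with the replacement,
    # mirroring the AppleScript text-item-delimiters trick.
    return replacement.join(text.split(search))


def swap_extension(path: str, old: str = ".pdf", new: str = ".ps") -> str:
    # Hypothetical safer variant: only touch a trailing extension.
    if path.endswith(old):
        return path[: -len(old)] + new
    return path


if __name__ == "__main__":
    hfs_path = "Macintosh HD:Users:me:scans.pdf:lesson1.pdf"
    print(replace_chars(hfs_path, ".pdf", ".ps"))  # Macintosh HD:Users:me:scans.ps:lesson1.ps
    print(swap_extension(hfs_path))                # Macintosh HD:Users:me:scans.pdf:lesson1.ps
```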

PDF::API2, Preview.app, kanji fonts, and me

I’d love to know why this PDF file displays its text correctly in Acrobat Reader, but not in Preview.app (compare to this one, which does). Admittedly, the application generating it is including the entire font, not just the subset containing the characters used (which is why it’s so bloody huge), but it’s a perfectly reasonable thing to do in PDF. A bit rude to the bandwidth-impaired, perhaps, but nothing more.
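A subset font only has to cover the characters a document actually uses, which is why it can be so much smaller than the whole font. The Python sketch below collects those code points and formats them as a comma-separated U+XXXX list. That is one of the forms accepted by the --unicodes option of fontTools' pyftsubset tool. The function names are hypothetical. The sketch also ignores ligatures and alternate glyphs, which a real subsetter has to handle as well.

```python
def codepoints_used(text: str) -> list[int]:
    # Unique, non-whitespace code points in ascending order: roughly the
    # characters a subset font must cover (ligatures/alternates ignored).
    return sorted({ord(ch) for ch in text if not ch.isspace()})


def unicodes_arg(codepoints: list[int]) -> str:
    # Format as "U+XXXX,U+YYYY" for a subsetting tool.
    return ",".join(f"U+{cp:04X}" for cp in codepoints)


if __name__ == "__main__":
    sheet = "日本 日本語 学生"
    cps = codepoints_used(sheet)
    print(len(cps), unicodes_arg(cps))
```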

While I’m on the subject of flaws in Preview.app, let me point out two more. One that first shipped with Tiger is the insistence on displaying and printing Aqua data-entry fields in PDF files containing Acrobat forms, even when no data has been entered. Compare and contrast with Acrobat, which only displays the field boundaries while that field has focus. Result? Any page element that overlaps a data-entry field is obscured, making it impossible to view or print the blank form. How bad could it be? This bad (I’ll have to make a screenshot for the non-Preview.app users…).

The other problem is something I didn’t know about until yesterday (warning: long digression ahead). I’ve known for some time that only certain kanji fonts will appear in Preview.app when I generate PDFs with PDF::API2 (specifically, Kozuka Mincho Pro and Ricoh Seikaisho), but for a while I was willing to work with that limitation. Yesterday, however, I broke down and bought a copy of the OpenType version of DynaFont’s Kyokasho, specifically to use it in my kanji writing practice. As I sort-of expected, it didn’t work.

[Why buy this font, which wasn’t cheap? Mincho is a Chinese style used in books, magazines, etc.; it doesn’t show strokes the way you’d write them by hand. Kaisho is a brush style that shows strokes clearly, but they’re not always the same strokes. Kyoukasho is the official style used to teach kanji writing in primary-school textbooks in Japan. (I’d link to the nice page in the sci.lang.japan FAQ that shows all of them at once, but it’s not there any more, and several of the new pages are just editing stubs; I’ll have to make a sample later.)]

Anyway, what I discovered was that if you open the un-Preview-able PDF in the full version of Adobe Acrobat, save it as PostScript, and then let Preview.app convert it back to PDF, not only does it work (see?), but the file size drops from 4.2 megabytes to 25 kilobytes. And the pair of conversions takes only a few seconds.

Wouldn’t it be great to automate this task using something like AppleScript? Yes, it would. Unfortunately, Preview.app is not scriptable. Thanks, guys. Fortunately, Acrobat Distiller is scriptable and just as fast.

On the subject of “why I’m doing this in the first place,” I’ve decided that the only useful order in which to learn new kanji is the order in which they’re used in the textbooks I’m stuck with for the next four quarters. The authors don’t seem to have any sensible reasons for the order they’ve chosen, but they average 37 new kanji per lesson, so at least they’re keeping track. Since no one else uses the same order, and the textbooks provide no support for actually learning kanji, I have to roll my own.

There are three Perl scripts involved, which I’ll clean up and post eventually: the first reads a bunch of vocabulary lists and figures out which kanji are new to each lesson, sorted by stroke count and dictionary order; the second prints out the practice PDF files; the third is for vocabulary flashcards, which I posted a while back. I’ve already gone through the first two lessons with the Kaisho font, but I’m switching to the Kyoukasho now that I’ve got it working.
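The first script's job can be sketched roughly as follows. It is written in Python rather than Perl, and the names and inputs are hypothetical. The idea is to walk the lessons in order and keep a running set of kanji already seen. For each lesson, the new kanji are sorted by stroke count, with ties broken by a dictionary entry number. The stroke-count and dictionary-number tables are assumed inputs; in practice they would come from a kanji dictionary file. The stroke counts in the example are the standard ones, but the dictionary numbers are placeholders.

```python
def is_kanji(ch: str) -> bool:
    # Assumption: only the main CJK Unified Ideographs block counts as kanji.
    return "\u4e00" <= ch <= "\u9fff"


def new_kanji_by_lesson(lessons, strokes, dict_no):
    """lessons: one vocabulary list per lesson, in textbook order.
    Returns, per lesson, the kanji not seen in any earlier lesson,
    sorted by stroke count, then dictionary number."""
    seen = set()
    result = []
    for vocab in lessons:
        fresh = {ch for word in vocab for ch in word if is_kanji(ch)} - seen
        seen |= fresh
        # Kanji missing from the tables sort last instead of raising KeyError.
        ordered = sorted(
            fresh,
            key=lambda k: (strokes.get(k, 99), dict_no.get(k, float("inf")), k),
        )
        result.append(ordered)
    return result


if __name__ == "__main__":
    lessons = [["日本", "学生"], ["先生", "日曜日"]]
    strokes = {"日": 4, "本": 5, "生": 5, "学": 8, "先": 6, "曜": 18}
    dict_no = {"日": 1, "本": 2, "生": 3, "学": 4, "先": 5, "曜": 6}  # placeholders
    for n, kanji in enumerate(new_kanji_by_lesson(lessons, strokes, dict_no), 1):
        print(n, "".join(kanji))  # prints "1 日本生学", then "2 先曜"
```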

Putting it all together, my study sessions look like this. For each new kanji, look it up in The Kanji Learner’s Dictionary to get the stroke order, readings, and meaning; trace the Kyoukasho sample several times while mumbling the readings; write it out 15 more times on standard grid paper; write out all the readings on the same grid paper, with on-yomi in katakana and kun-yomi in hiragana, so that I practice both. When I finish all the kanji in a lesson, I write out all of the vocabulary words as well as the lesson’s sample conversation. Lather, rinse, repeat.

My minimum goal is to catch up on everything we used in the previous two quarters (~300 kanji), and then keep up with each lesson as I go through them in class. My stretch goal is to get through all of the kanji in the textbooks by the end of the summer (~1000), giving me an irregular but reasonably large working set, and probably the clearest handwriting I’ve ever had. :-)

Dear Adobe,

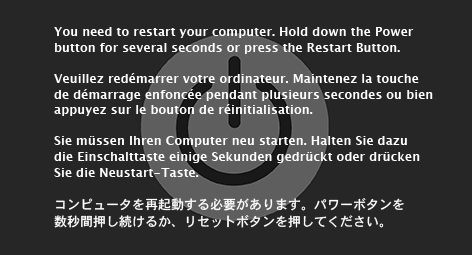

While preparing a faithful, high-resolution copy of the Mac OS X kernel-panic screen (to submit a patch to XScreenSaver’s BSOD module, now that JWZ has gotten it mostly working as a native Mac screen-saver), I ran into several problems. First, the result of my efforts:

Now for the problems. I started out working in Photoshop, mostly because I hate Illustrator and wish CorelDRAW 4 had been stabilized and ported to every useful platform, but quickly gave up. Even for a simple graphic like this, it’s just annoying to work without real drawing tools.

The power button took about fifteen seconds in Illustrator, leaving me to concentrate on the text (12.2/14.6pt Lucida Grande Bold and 13/14.6pt Osaka, by the way). The Japanese version took the longest, obviously, especially with the JPEG artifacts in my source image.

Mind you, the above PNG file wasn’t exported from Illustrator, because all of my attempts looked like crap. The anti-aliasing made the text too fuzzy. To produce a smooth background image with crisp text, I had to manually transfer the two layers to Photoshop. I exported the background graphic at 300dpi without anti-aliasing, resized it in Photoshop using the Bicubic Sharper mode, then created a text field, pasted in the text, set the anti-aliasing mode to Sharp, and nudged it into the correct position.

The real fun came when I wanted to take the text I’d so painstakingly entered and paste it into another application.

I couldn’t.

Selecting the text in Illustrator CS2 and copying it left me with something that could only be pasted into Photoshop or InDesign. Fortunately, InDesign was written by people who think that text is useful, and after pasting it there I could copy it again, ending up with something that other applications understood. See?

You need to restart your computer. Hold down the Power button for several seconds or press the Restart button.

Veuillez redémarrer votre ordinateur. Maintenez la touche de démarrage enfoncée pendant plusieurs secondes ou bien appuyez sur le bouton de réinitialisation.

Sie müssen Ihren Computer neu starten. Halten Sie dazu die Einschalttaste einige Sekunden gedrückt oder drücken Sie die Neustart-Taste.

コンピュータを再起動する必要があります。パワーボタンを数秒間押し続けるか、リセットボタンを押してください。

Arcana Unreadable

Picked up a copy of Monte Cook’s Arcana Unearthed over the weekend, in case our group wanted to try it out sometime (D&D 3.5 went over like a lead balloon), and discovered that, while Monte may have learned a great deal from the rules mistakes in 3rd edition D&D, he has definitely not learned from the layout mistakes.

- the font is smaller, with a small x-height.

- the headers stand out less from the body text.

- the body font uses lower-case numbers (similar to the web font Georgia, for those who aren't up on type jargon) so they blend in with the surrounding words.

- new sections still start in the middle of a column, so you have to hunt for things like character types.

- the index, while comprehensive, is set in italic sans-serif, so it's extremely hard to read.

- the index is also set with negative leading, so the page numbers in multi-line entries overlap slightly.
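The leading complaints can be made concrete with size/line-spacing notation such as the 12.2/14.6pt quoted in the kernel-panic post. The first number is the type size and the second is the baseline-to-baseline distance, so their difference is the extra space between lines. When the difference is negative, lines sit closer together than the type is tall, and adjacent lines (or, here, page numbers) can collide. The following is a small Python sketch with hypothetical function names. The 9/8pt case is an invented example, not a measurement from the book.

```python
def parse_size_leading(spec: str) -> tuple[float, float]:
    # "12.2/14.6pt" -> (12.2, 14.6): type size / baseline-to-baseline distance.
    body = spec.strip()
    if body.endswith("pt"):
        body = body[:-2]
    size, line = body.split("/")
    return float(size), float(line)


def extra_leading(spec: str) -> float:
    # Positive: space between lines; zero: "set solid";
    # negative: tighter than the type size, so lines may collide.
    size, line = parse_size_leading(spec)
    return line - size


if __name__ == "__main__":
    for spec in ("12.2/14.6pt", "13/14.6pt", "9/8pt"):
        print(spec, round(extra_leading(spec), 2))
```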

The only nice thing I can say compared to the WotC D&D books is that the page backgrounds aren’t crufted up with “spiffy” graphics, so you have black text on a white page. That high contrast, along with the generous leading, is all that saves it from complete unreadability. 3M Post-it Flags are all that can save it as a reference manual; you’ll never find anything quickly without them.

He does offer it as a PDF, which would be great if it weren’t for the tiny fonts. I suspect it would be quite readable blown up to fill a 20” widescreen display, but not on anything smaller. Blech.

Updates: I’ve found some more layout errors to be annoyed by.

Who thought this was a good idea?

I like Dean Martin. I picked up one of his albums on the iTunes Music Store recently, and I’m glad to see that Capitol Records is actively promoting him once again. But what eagle-eyed halfwit thought that “light gray on white” was an appropriate color scheme for body text, especially at a size as small as 9px?

I apologize in advance to anyone who follows that link. Especially anyone old enough to remember Dino.
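WCAG 2 (the W3C's accessibility guidelines) offers one way to put a number on “light gray on white”. Convert each sRGB color to relative luminance, then divide the lighter luminance plus 0.05 by the darker luminance plus 0.05. Black on white scores 21:1, and the AA level asks for at least 4.5:1 for normal-size body text. The Capitol page's actual colors aren't recorded here, so the grays below are assumed examples. The function names are hypothetical.

```python
def _linear(c8: int) -> float:
    # 8-bit sRGB channel -> linear value, per the WCAG 2 relative-luminance formula.
    c = c8 / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(fg: str, bg: str) -> float:
    # Order doesn't matter: the lighter color always goes on top.
    lighter, darker = sorted(
        (relative_luminance(fg), relative_luminance(bg)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


if __name__ == "__main__":
    # Assumed example colors; the real page's values are not known.
    for fg in ("#000000", "#999999", "#cccccc"):
        ratio = contrast_ratio(fg, "#ffffff")
        print(fg, f"{ratio:.2f}:1", "passes AA" if ratio >= 4.5 else "fails AA")
```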

U&lc

Many years ago, a wonderful secret was passed on to me: U&lc was free. The printed magazine is no longer with us (and wasn’t even free at the end, although it was certainly worth its cover price). The web site is a pale shadow of the magazine, but still a good read.

Good article, bad HTML

David Brin usually writes interesting stuff (although I was horribly disappointed by his most recent Uplift novel). This article is no exception. Unfortunately, it looks hideous. Not only is the entire thing deliberately set in Helvetica Bold, it has medium-blue text on a light-gray background, no leading, and the lines are fully justified (something that’s rarely appropriate on the web).

For more fun, the text is broken up into pseudo-paragraphs by pairs of `<br>` tags, something I haven’t seen done in years. The only nice thing I can say about the article, apart from the content, is that the lines would be a comfortable length for reading if he’d used either a few points of leading or a non-bold font.

More font madness

So I went to Apple’s support site to search the knowledge base, and couldn’t read a damn thing. My eyes were a bit tired, and the search results were displayed in 9.5pt Arial. Of the many preferences you can set when you log in with your Apple ID, legibility isn’t one. Gosh, I wonder what would happen if I searched for “Universal Access”?