Tools

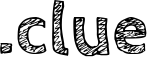

3D coffee update

I designed two new replacement tops for the Essenza Mini Mug Drip Tray, so I could leave it attached full-time and just replace the top when I want to switch from espresso/cappuccino cups to giant mugs. As a bonus, the tall piece sits nicely on my Keurig Elite as well, adding enough height to cut down on splashing while still holding my largest mugs stable.

The tall cover piece is a bit larger than the short one, so that it’s wide enough for my largest mugs when used on the Keurig. Both could probably use a slight lip, but I’ve found that the slight roughness of the print surface adds enough friction to counter the vibration. I replaced my hacked-together drip hole with the nice teardrop() shape from the BOSL library. It fills the space nicely and looks cooler. I also went with fewer, larger holes, to reduce the amount of flex. Everything’s still designed for printing with 0.3mm layer-height, of course.
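BOSL’s teardrop() is normally used for horizontal holes. It is a circle whose top is drawn out into a point with 45° sides, so the roof of the hole never overhangs by more than 45° and prints without supports.

The TypeScript below is a rough sketch of that outline as a polygon, for illustration only. `teardropOutline` and `polygonArea` are hypothetical names, not BOSL’s OpenSCAD implementation, which takes its own parameters.

```typescript
type Point = { x: number; y: number };

// Hypothetical helper, not BOSL's teardrop(): a circle of radius r whose top is
// replaced by two straight sides at 45° meeting at a point on the +Y axis.
function teardropOutline(r: number, segments: number = 36): Point[] {
  const deg = Math.PI / 180;
  // The 45° sides touch the circle at 45° and 135° and meet at (0, r * sqrt(2)).
  const points: Point[] = [{ x: 0, y: r * Math.SQRT2 }];
  // Walk the remaining 270° of arc, from 135° around the bottom to 405° (= 45°).
  for (let i = 0; i <= segments; i++) {
    const a = (135 + (270 * i) / segments) * deg;
    points.push({ x: r * Math.cos(a), y: r * Math.sin(a) });
  }
  return points;
}

// Shoelace formula, used only to sanity-check the outline.
function polygonArea(pts: Point[]): number {
  let twiceArea = 0;
  for (let i = 0; i < pts.length; i++) {
    const p = pts[i];
    const q = pts[(i + 1) % pts.length];
    twiceArea += p.x * q.y - q.x * p.y;
  }
  return Math.abs(twiceArea) / 2;
}

// Exact area: a 270° sector plus the r-by-r square between centre, tangent points, and tip.
const r = 2;
const exact = (3 * Math.PI / 4 + 1) * r * r;
const approx = polygonArea(teardropOutline(r));
```

The polygon slightly underestimates the true area, because the arc is approximated by straight chords. More segments close the gap.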

Update

Should be a published thingy now.

Joplin hack!

The CLI client for Joplin is very limited in functionality. It’s good for import, export/backup, and some very simple note-manipulation, but that’s all.

The mobile clients are mostly functional, provided you want all your notes sorted by title, created date, or updated date across all notebooks (per-notebook settings are a concept yet to be implemented on any client). The concept of dragging notes into a specific order is only supported on the desktop client.

…unless you’re willing to cheat, which I am. Using the plugin API and code cribbed from the Combine Notes plugin, I wrote a tiny little plugin that modifies the titles of the selected notes so that they are prefixed with a string of the form “001# ”, replacing any existing prefix. So, if you select all the notes in a folder that you’ve arranged in a custom order, then when they replicate to a mobile client, title-sort will preserve your order.
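The zero padding is what makes this work, assuming a client’s title sort compares titles as plain strings. That is an assumption: a client could use locale-aware or natural sorting instead.

Here is a standalone sketch of the renaming step, outside Joplin, so it can be checked against JavaScript’s default lexicographic sort. `withOrderPrefix` and `pad3` are hypothetical helpers, not part of the Joplin API.

```typescript
// Matches a prefix left by a previous run, e.g. "001# ".
const ORDER_PREFIX = /^\d{3}# /;

// Zero-pad to three digits without relying on String.prototype.padStart.
function pad3(n: number): string {
  let s = String(n);
  while (s.length < 3) s = "0" + s;
  return s;
}

// Hypothetical standalone version of the plugin's renaming step: number the
// titles in the order given, replacing any existing prefix.
function withOrderPrefix(titles: string[]): string[] {
  return titles.map((title, i) => pad3(i + 1) + "# " + title.replace(ORDER_PREFIX, ""));
}

const customOrder = ["Zebra", "apple", "005# Mango", "Banana"];
const renamed = withOrderPrefix(customOrder);
// A plain lexicographic sort (Array.prototype.sort's default) keeps the custom order.
const titleSorted = renamed.slice().sort();

// Without padding, ordering breaks at 10: "10# c" sorts between "1# a" and "2# b".
const unpadded = ["1# a", "2# b", "10# c"].sort();
```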

I’d make it a lot more robust and customizable before publishing it as an official Joplin plugin, but it meets my needs, and if anyone else thinks they’d find it useful, here’s Custom Order Titling.

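```typescript
import joplin from 'api';
import { MenuItemLocation } from 'api/types';

// Left-pad with zeros to a fixed width, e.g. zeroPadding(7, 3) === "007".
// Numbers wider than `length` get truncated (1000 -> "000"), so with a
// width of 3 this only works for up to 999 selected notes.
function zeroPadding(n: number, length: number): string {
  return (Array(length).join('0') + n).slice(-length);
}

joplin.plugins.register({
  onStart: async function () {
    await joplin.commands.register({
      name: 'CustomOrderTitling',
      label: 'Custom Order Titling',
      execute: async () => {
        // IDs come back in the order the notes were added to the selection.
        const ids = await joplin.workspace.selectedNoteIds();
        // Matches a prefix written by an earlier run, e.g. "001# ".
        const prefixRegexp = /^\d{3}# /;

        // Renumbering a single note is pointless, so require a multi-selection.
        if (ids.length > 1) {
          let i = 1;
          for (const noteId of ids) {
            const note = await joplin.data.get(['notes', noteId], { fields: ['title'] });
            // Strip any old prefix so re-running the command renumbers cleanly.
            const strippedTitle = note.title.replace(prefixRegexp, '');
            const newTitle = zeroPadding(i, 3) + '# ' + strippedTitle;
            await joplin.data.put(['notes', noteId], null, { title: newTitle });
            i = i + 1;
          }
        }
      },
    });

    // Add the command to the note list's right-click menu.
    await joplin.views.menuItems.create(
      'contextMenuItemconcatCustomOrderTitling',
      'CustomOrderTitling',
      MenuItemLocation.NoteListContextMenu
    );
  },
});
```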

Two caveats:

- I believe that sync works at the whole-note level, so updating even a single field like the title replicates the entire note, though only the metadata and body text, not any attachments.
- The order in which you build up your selection of notes is the order the plugin will see them in. So, if you were to add them to the selection in random order, the prefixes would be generated to match.

Turning customers into products...

I own exactly one wifi-connected wall plug. It controls the hot-water recirculation pump, so that it doesn’t just run 24x7, and it’s also Alexa-reachable if I want to turn it on mid-day.

This week, when I opened the associated app, it announced that real soon now it will require an account to continue working. Which means that WeMo wants to start collecting data about me to sell.

Which means that I’ll be e-wasting this product the moment that it demands I log in for security updates or continued functionality.

Unrelated,

I installed the new version of MalwareBytes on my MacBook Air. It activated a trial of their premier service with real-time protection.

Not only did the palm-rest area of my laptop get quite warm, but it also caused the pyenv shim command for python to take several seconds to run. Since I use python --version to help set my shell prompt (letting me know if it’s 2, 3, or some virtualenv), this was immediately quite painful.

Suddenly I do not want to become a paying customer…

Dulling the shine

One for Rick C…

Overture PLA Plus/Pro is giving me a much less shiny top surface, at least in the dark blue (love the color, by the way).

Related, while using my heat gun to de-string a print, I noticed that it did a nice job of slightly dulling the extremely shiny finish that I get on the bottom from printing on glass+hairspray. I had it set to 350°F with the fan on high, and kept moving and rotating the parts to avoid melting anything thicker than strings.

Note that this is unrelated to the use of heat guns to restore smooth plastic finishes, which involves reducing the impact of UV and oxidation damage without sanding/polishing off the surface layer.

Oh, and what was I printing? A bag clip, of course. 😁

More specifically, this STL, scaled up from this clip by a designer at Prusa. I found the original wore out too quickly when used to secure twice-folded-over coffee bags, so I scaled it up (a bit more XY, a lot more Z) and printed at 0.3mm, with 5 walls so it printed solid without any infill pattern. The (quite mild) stringing came from testing Cura’s “smart hiding” of layer-start positions with a spool of filament that’s a bit thicker than the nominal 1.75mm.
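Here is why enough walls means no infill. Walls are laid in from both faces of a section, so N walls per side cover 2 × N × line width before any infill is needed.

This sketch assumes a 0.4mm line width, a common default for a 0.4mm nozzle; the post doesn’t say what was actually used. `wallsFillSection` is a hypothetical helper, and it ignores slicers’ own gap-fill rules.

```typescript
// Hypothetical check: is a section thin enough that walls alone fill it?
// Assumes every wall is one extrusion line of lineWidthMm.
function wallsFillSection(thicknessMm: number, walls: number, lineWidthMm: number = 0.4): boolean {
  const coveredByWalls = 2 * walls * lineWidthMm; // walls grow inward from both faces
  return thicknessMm <= coveredByWalls + 1e-9;    // tolerance for float rounding
}

// With 5 walls at 0.4 mm, sections up to about 4 mm thick are all perimeter.
const maxSolidThickness = 2 * 5 * 0.4;
```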

(picture is unrelated)

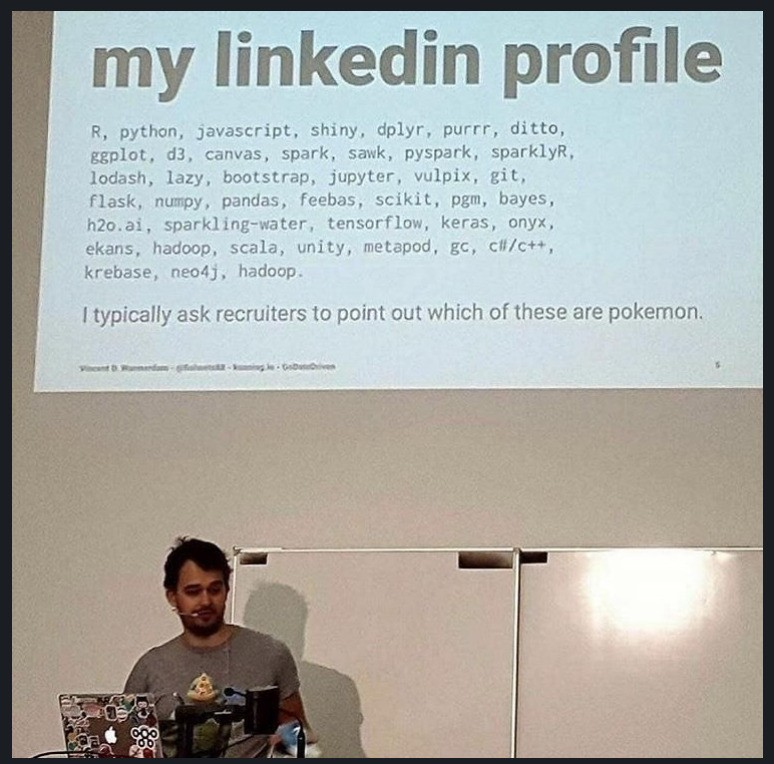

Don’t Be A Tool

In the grand tradition of using your 3D printer to print 3D printer accessories, quite a few people have designed little stands to hold all their 3D printer tools and published them to the various “search” sites. With few exceptions, they suck.

Common problems include:

- I need seven inches or more: requires at least 180mm in at least two dimensions. My printer’s build area is 255x155x170mm.
- Carve away anything that doesn’t look like an elephant: designed as a solid block of plastic with small holes for tools.
- If your love life requires close air support, something has gone very wrong: requires significant supports to print successfully.
- Hours will seem like days: all of the above contribute to ridiculous print times.
- Where does the third one go?: assumes a specific workspace layout (wall-mount, pegboard, attaches to one model of printer, etc.).
- One ring to rule them all: very-specifically-sized slots for every tool you could possibly need, not just the ones you actually use regularly.
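The first complaint is plain arithmetic. Only one axis of a 255x155x170mm build volume reaches 180mm, so a design that needs 180mm in two dimensions can’t fit with any axis-aligned placement.

The hypothetical check below only tries the model as-is and turned 90° on the bed. It ignores diagonal placement and laying the model on a different face.

```typescript
// [X, Y, Z] extents in mm.
type Dims = [number, number, number];

// Build area quoted in the post.
const BUILD_VOLUME: Dims = [255, 155, 170];

// Hypothetical check: does a model's bounding box fit the build volume,
// either as-is or turned 90° on the bed (X and Y swapped)?
function fitsBuildVolume(model: Dims, volume: Dims = BUILD_VOLUME): boolean {
  const [mx, my, mz] = model;
  const [vx, vy, vz] = volume;
  if (mz > vz) return false;
  return (mx <= vx && my <= vy) || (my <= vx && mx <= vy);
}
```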

There’s a pretty reasonable one designed specifically for the Dremel 3D45. Except for the part where it mounts to the right side of the printer, which isn’t where I use any of the tools.

File this one at Cults3D under “baffling” (even though it would fit nicely on my printer), because it has prominent storage for seven spare nozzles. Why? Not even “why do you need seven nozzles”, but “why do you have them all out on your workbench gathering dust in unlabeled bins?”

Right now, I don’t want to print any of them, and I don’t want to spend the time to design my own, so my tool holder will continue to be a $5 box from Michaels. Maybe I’ll make a little organizer insert for it sometime, but honestly, I pretty much just use the scraper, flush cutters, emery boards, and a small sharp knife, and those fit on the lid of the box, with room left over for the calipers.

The Temptation of 66%

Next time, Nancy, maybe you shouldn’t buy your souvenir pens from China…

“That’s the trouble with godhood: it robs you of your finer judgement. A deity so rarely has to pay for his mistakes!”

“...while heroes... heroes have an infinite capacity for stupidity! Thus are legends born!”

Nearly Topless

Thickness

I renamed my Cura 0.35mm layer-height preset from Coarse to Thick, because the Custom Printjob Naming plugin tries to abbreviate quality names to their initial letters, and if I have to choose between coarse and chunky, I’ll take chunky (this is not dating advice, but it could be). That makes the full set UAHGMOLTSC, which proves that I’m CACA (Crap At Creating Acronyms).
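This sketch shows the clash, assuming each name is abbreviated to its first letter. That is a simplification: the plugin’s real rules, especially for multi-word names like High Speed, may differ. `initialCollisions` is a hypothetical helper.

```typescript
// Hypothetical sketch: abbreviate each quality name to its first letter and
// report any letter that more than one name maps to.
function initialCollisions(names: string[]): Record<string, string[]> {
  const byInitial: Record<string, string[]> = {};
  for (const name of names) {
    const initial = name.charAt(0).toUpperCase();
    if (!byInitial[initial]) byInitial[initial] = [];
    byInitial[initial].push(name);
  }
  // Keep only the letters claimed by more than one name.
  const collisions: Record<string, string[]> = {};
  for (const initial of Object.keys(byInitial)) {
    if (byInitial[initial].length > 1) collisions[initial] = byInitial[initial];
  }
  return collisions;
}

const beforeRename = initialCollisions(["Ultra", "Good", "Medium", "Okay", "Low", "Coarse", "Chunky"]);
const afterRename = initialCollisions(["Ultra", "Good", "Medium", "Okay", "Low", "Thick", "Chunky"]);
```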

Mal-Adaptive

It seems Cura’s adaptive layer-height mode is experimental for a good reason. I printed a folding tablet holder that’s a good test of how well you’ve dialed in your filament settings, and it had a bunch of small holes in the top flat surfaces. Why? Because Cura calculated the number of top layers required for a 0.8mm surface based on the nominal layer height of 0.2mm, but because of nearby curved elements, printed them at 0.05mm instead. Four of those, totalling just 0.2mm, don’t make much of a surface. The stand is fully functional, just a bit moth-eaten on top.
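Here is the arithmetic behind those holes, as described above. The layer count is fixed from the nominal height, but the layers actually printed are much thinner. The variable names and the `layersFor` helper are illustrative, a sketch of the reasoning rather than Cura’s code.

```typescript
// Layers needed to reach a target thickness at a given layer height.
function layersFor(thicknessMm: number, layerHeightMm: number): number {
  // Small epsilon so divisions like 0.8 / 0.2 don't round up on float noise.
  return Math.ceil(thicknessMm / layerHeightMm - 1e-9);
}

const topThickness = 0.8; // mm, requested top surface
const nominal = 0.2;      // mm, the profile's layer height
const actual = 0.05;      // mm, what adaptive layers chose near curves

const planned = layersFor(topThickness, nominal);   // layer count decided up front: 4
const printedThickness = planned * actual;          // what actually got printed: 0.2 mm
const neededLayers = layersFor(topThickness, actual); // layers a full 0.8 mm would need: 16
```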

This explains some other minor flaws I was running into with this feature, so I’ve stripped it out of my config set for now. UHGMOLTSC.

Dremelizing Cura, Chainsaw Edition

Here’s a tarball of my first pass at completely overhauling these Dremel 3D45 Cura configs. In order to test them side-by-side, I changed the vendor name in all the references from “Dremel” to “DR”.

The most frustrating aspect of creating this set was that when Cura barfed on my configs, all it said in the logs was “Error when loading container: ‘int’ object is not iterable”. Nothing useful like the specific file or line number. The actual problem was that machine definition files can only have values in the form {"default_value": ...} or {"value": ...}, and I had copied actual values out of the material and quality files as part of my effort to maximize the use of inheritance and calculated values. And that’s a half-hour of my life I want back.
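A tiny lint pass could catch this before Cura does. This hypothetical TypeScript sketch flags entries in a definition’s "overrides" that are bare values rather than objects such as {"default_value": ...} or {"value": ...}, which is the kind of mistake described above.

`badOverrides` is an illustrative name, not part of Cura. The check only looks at that one shape; Cura’s own validation is more involved.

```typescript
// Hypothetical check, not part of Cura: every entry under "overrides" in a
// machine definition should be an object like {"default_value": ...} or
// {"value": ...}. Returns the keys whose entries are bare values instead.
function badOverrides(definition: { overrides?: Record<string, unknown> }): string[] {
  const overrides = definition.overrides || {};
  const bad: string[] = [];
  for (const key of Object.keys(overrides)) {
    const entry = overrides[key];
    // Numbers, strings, booleans, null, and arrays are all the wrong shape.
    if (typeof entry !== "object" || entry === null || Array.isArray(entry)) {
      bad.push(key);
    }
  }
  return bad;
}

// Example: speed_print pasted in as a bare number, as if copied from a quality file.
const definition = {
  overrides: {
    machine_width: { default_value: 255 },
    speed_infill: { value: "speed_print" },
    speed_print: 50,
  },
};
```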

My primary motive for building these was being able to adjust acceleration, jerk, and speed, and have all the other related values get calculated relative to them. Dremel’s original configs didn’t do this, and the linked GitHub repo is pretty much copied directly from those, with ongoing cleanup.

My secondary motive was expanding the list of recommended quality settings. I’d added a bunch of different layer heights (0.15, 0.25, 0.35, 0.4, and adaptive), but they didn’t show up in the top-level menu; I always had to go into the custom view to select from them, and then I lost the convenient UI for infill and supports. Defining a whole new printer with custom settings fixed that, and also automatically keeps overrides (like z offset) when I switch settings.

As part of the fun, I tried to come up with names for the various quality settings that matched the scheme Dremel used. Theirs were Ultra (0.05mm), High (0.1mm), Medium (0.2mm), Low (0.3mm), and High Speed (0.34mm plus some speed overrides). I added Good (0.15mm), Okay (0.25mm), Coarse (0.35mm), Chunky (0.4mm), and Adaptive (0.05-0.35mm). All of them inherit my revised acceleration/jerk settings, so the estimated print times are within 5%.

Thicc Cam

Sticktoitivity

Accepted wisdom is that the maximum practical layer height for a given nozzle size is ~80% of its diameter, or 0.32mm for the common 0.4mm nozzles. I was tinkering with Cura’s adaptive layer height feature (which does not offer the customizability of PrusaSlicer’s, and is hidden away as an experimental feature), and it happened to generate some layers all the way up at 0.4. So I figured, what the hell, let’s try it on something that doesn’t have to be sturdy, and that would still work just fine if the top half fell off. Or even the top 2/3.

Turned out just fine, with some very light stringing on the inside of the tube that was easy to shave. Fits nicely, too. I’m sure there are plenty of models where 0.4mm layers wouldn’t work, but it’s a time-saver when it does.

(and, yes, if I want to really speed things up, MicroSwiss does make 0.6mm and 0.8mm nozzles for the 3D45, as well as replacement 0.4mm ones; all of them are also rated for abrasive filaments, something I’ve had no reason to try yet)
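Here is the rule of thumb as a one-liner, applied to the nozzle sizes mentioned here. `maxLayerHeight` is a hypothetical helper. The 80% ratio is the accepted-wisdom figure quoted above, not a hard limit: the 0.4mm layers in this experiment are 100% of the nozzle diameter.

```typescript
// Rule of thumb: max practical layer height ≈ ratio × nozzle diameter.
function maxLayerHeight(nozzleMm: number, ratio: number = 0.8): number {
  // Round to 0.01 mm to hide floating-point noise in the product.
  return Math.round(nozzleMm * ratio * 100) / 100;
}

// The stock 0.4 mm nozzle and the larger 0.6 and 0.8 mm sizes.
const limits = [0.4, 0.6, 0.8].map((d) => maxLayerHeight(d));
```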

Related,

My miter box cam pin has finally been indexed on Thingiverse, and shows up both in searches and in my profile. It took two months.

Found a Zorkmid

Well, printed one, anyway, as a Cura test. Came out pretty decent, printed on edge on a raft.

Dremelizing Cura

Someone has work-in-progress configs on GitHub to get the current version of Cura to work with the Dremel 3D45. The basic functionality is there, but there’s no network or camera support, and the output quality is… poor (way too much retraction and stringing, and big gaps in pin-like elements). I’m happy with PrusaSlicer for now, but it’s nice to see someone working on more options.

Incidentally, I took a look at how Dremel got the network printing and webcam support into their customized old version of Cura. They heavily edited the UltiMaker 3 script to use their API, but it looks like they never got any kind of network discovery working. It would be faster to write a new Python plugin than to try to modify the current version of the UM3 code, especially since I’ve already got a working Bash script.

Quality update!

To no surprise, replacing the 6.5mm retraction with the 2-3mm that Dremel had in their version of Cura significantly improved the results. I can now do head-to-head comparisons between Cura 4.8 and PrusaSlicer 2.3, to decide which one handles specific parts better.

For my babydai koma, I find the overhang+supports quality unacceptable in both, so I’ve added some 0.5mm-wide manual supports to the model that can be easily snapped off. This forces both slicers to treat them as bridges, which significantly improves the results. And cuts the print time and filament use a bit.